2018 is the year of the objective function.

The zeitgeist of human culture elevates certain ideas to our collective consciousness. We have ways of perceiving the world that shape politics, consumer mindset, public policy, and art. And this year, I think, we’ll start talking about objective functions.

Every AI has a goal

We’ve spent decades collecting data. Usually, the amount we collect is simply too much to go through by hand; in fact, that’s a pretty workable definition of the term “Big Data.” That means we have to rely on algorithms to make sense of it for us.

The field of statistics is about making sense of numbers, algorithmically. We can use algorithms to identify a trend in data, or to guess at an underlying function using regression. We can try to find similar groups in the data using clustering. All of these give us potential insights into the things the data is about.

Finding clusters in data. By Chire (Own work) at https://commons.wikimedia.org/wiki/File:K-means_convergence.gif
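To make the clustering idea concrete, here is a minimal sketch of k-means, the technique in the animation above, in plain Python. The data points, the function names, and the fixed number of iterations are all assumptions made for illustration; a real project would more likely reach for a library. Notice that even this old-school statistical method has a goal baked in: it keeps moving the cluster centers to shrink the distance between each point and its nearest center.

```python
import random

def sq_dist(a, b):
    """Squared straight-line distance between two 2-D points."""
    return (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2

def kmeans(points, k, iterations=20, seed=0):
    """Group 2-D points into k clusters with plain k-means."""
    rng = random.Random(seed)
    centers = rng.sample(points, k)  # start from k randomly chosen points
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest center.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: sq_dist(p, centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its cluster.
        for i, cluster in enumerate(clusters):
            if cluster:  # keep the old center if a cluster ends up empty
                centers[i] = (
                    sum(x for x, _ in cluster) / len(cluster),
                    sum(y for _, y in cluster) / len(cluster),
                )
    return centers, clusters

# Made-up data: one blob near (0, 0) and another near (10, 10).
points = [(0, 0), (1, 0), (0, 1), (1, 1),
          (10, 10), (11, 10), (10, 11), (11, 11)]
centers, clusters = kmeans(points, k=2)
print(sorted(centers))
```

On this toy data the two centers settle in the middle of each blob, which is the "insight" the algorithm hands back to us.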

More recently, we started using algorithms to analyze text. We can guess at the sentiment in a document, based on whether it has positive or negative words. We can try to summarize it by analyzing language structure. This is a less-perfect science, because algorithms are bad at things like sarcasm.
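Here is a rough sketch of that word-counting approach to sentiment. The word lists and the `sentiment_score` function are invented for this example and are far smaller than anything used in practice.

```python
import re

# Tiny hand-picked word lists (an assumption for this sketch).
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "awful", "sad"}

def sentiment_score(text):
    """Count positive words minus negative words; above zero reads as positive."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(word in POSITIVE for word in words)
    negatives = sum(word in NEGATIVE for word in words)
    return positives - negatives

print(sentiment_score("I love this phone, the camera is excellent"))    # 2
print(sentiment_score("Terrible service and awful food"))               # -2
print(sentiment_score("Oh great, another delay. I just love waiting.")) # 2
```

The last sentence is plainly a complaint, yet it scores as positive as the first one. That is the sarcasm problem in miniature.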

There’s a big difference afoot, however. The algorithms on which we relied were made by humans: Statisticians, SQL developers, and business analysts. In recent years, we’ve figured out how to design software that creates its own algorithms. This is the field of machine learning (or what some would call simple AI).

Machine learning requires two things: A data set, and an objective. If you want to create an algorithm that’s good at playing a video game, you feed it the screens of the video game and its goal. The algorithm will ruthlessly, mercilessly run through trial-and-error evolution, culling offspring that don’t play the game well and reproducing those that do.

AlphaZero beat every other piece of chess software by playing games, seeing who won, and ruthlessly culling the losers over and over again. Also no fun at parties. (Photo by Luiz Hanfilaque on Unsplash)

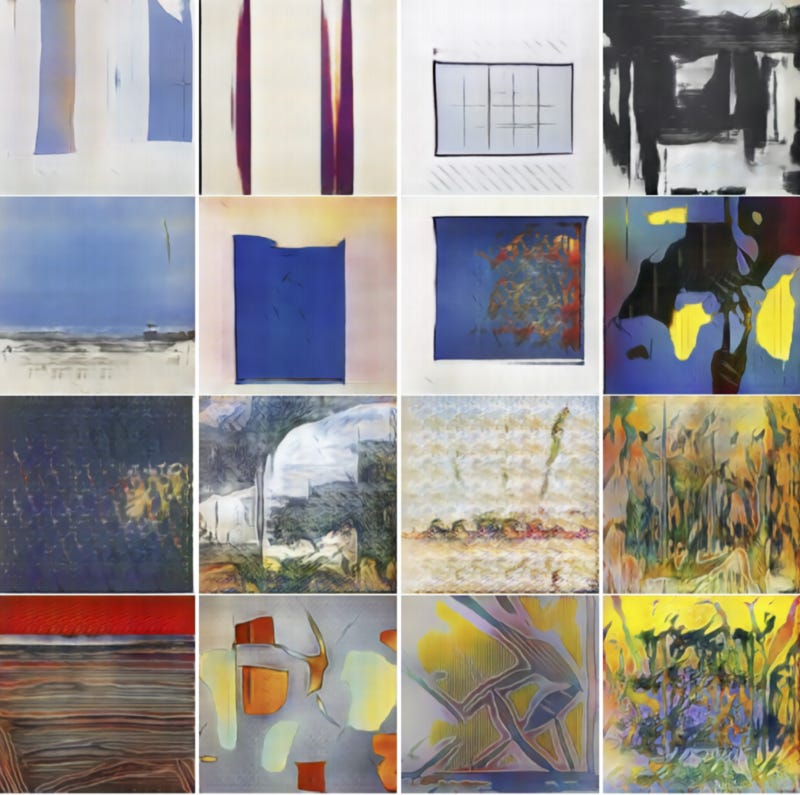
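The cull-and-reproduce loop described above can be sketched in a few lines. This is a toy evolutionary search, not how any particular game-playing system works: the "genome" is just a list of numbers, and the objective (get every number close to 3) is a stand-in I made up for "plays the game well."

```python
import random

def evolve(objective, genome_length=5, population_size=30,
           generations=200, seed=0):
    """Trial-and-error search: keep the best half, mutate, repeat."""
    rng = random.Random(seed)
    population = [[rng.uniform(-10, 10) for _ in range(genome_length)]
                  for _ in range(population_size)]
    for _ in range(generations):
        # Rank candidates by the objective; higher is better.
        population.sort(key=objective, reverse=True)
        survivors = population[: population_size // 2]  # cull the bottom half
        # Each survivor produces one slightly mutated child.
        children = [[gene + rng.gauss(0, 0.5) for gene in parent]
                    for parent in survivors]
        population = survivors + children
    return max(population, key=objective)

def score(genome):
    """Hypothetical objective: every number should be as close to 3 as possible."""
    return -sum((gene - 3) ** 2 for gene in genome)

best = evolve(score)
print([round(gene, 2) for gene in best], round(score(best), 3))
```

Nothing in `evolve` knows what the goal means. Swap in a different `score` function and the same loop will chase that instead.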

Other machine learning approaches have different goals. In a generative adversarial network (GAN), two pieces of software with competing goals work against one another. The first might try to create fake poetry that looks like a human wrote it, having trained itself based on books of human-written poems. The other might try to separate real human poetry from fake, having been trained on the same data. The two algorithms are locked in battle, and the best either one can do is hope to win half the time.

This kind of adversarial AI has been used to make fake celebrities, or to create new kinds of art:

What happens when two algorithms fight about art. https://arxiv.org/pdf/1706.07068.pdf

In addition to a ravenous appetite for data, all machine learning has a goal of some kind. Developers call this the objective function: The thing the algorithm is trying to be amazing at.
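For a sense of what an objective function looks like on the page, here is a small sketch. The data, the learning rate, and the function names are assumptions chosen for illustration. The objective measures how wrong a guess is, and the learning loop does nothing but nudge the guess in whichever direction makes that number smaller.

```python
def objective(w, data):
    """Mean squared error of the prediction y = w * x. Lower is better."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def gradient(w, data):
    """Slope of the objective with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in data) / len(data)

def fit(data, learning_rate=0.01, steps=500):
    """Gradient descent: repeatedly step downhill on the objective."""
    w = 0.0
    for _ in range(steps):
        w -= learning_rate * gradient(w, data)
    return w

# Made-up data that follows y = 2x exactly.
data = [(1, 2), (2, 4), (3, 6), (4, 8)]
w = fit(data)
print(round(w, 4), objective(w, data))
```

The loop recovers a value of `w` very close to 2 without ever being told the rule. All it was given was data and a number to drive down.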

Objective functions are all around us

Nature has an objective function, too. Every creature shaped by evolution has adapted, over eons, to be the most successful species in its niche. Passing genes down to offspring more successfully than others has been the guiding aim of all genes. As Dawkins observed in The Selfish Gene, it’s not that we have genes; it’s that genes have us — as tools for their continued survival, each of us an experiment in adaptation.

In capitalist liberal democracies, corporations have an objective function: Shareholder wealth. Tim O’Reilly says that in corporations we may have our first rogue AIs, exploiting every possible externality in mindless pursuit of greater returns and the concentration of wealth. Cory Doctorow and others think that popular culture’s portrayal of AIs is actually rooted in this irresistible, impersonal mindlessness with which global organizations act.

Recent political events have also shown us the objective functions of many of the communications platforms on which we rely. Some social networks have been slow to stop abuse and block bots because doing so undermines revenues. Others, focused on engagement, have amplified the kind of polarizing speech and disagreement that triggers our fight reflexes, keeping us glued to screens, convinced that the world needs to hear our opinions.

But are these the right goals? How do we balance private and public concerns, or the will of the many against the rights of the few? How do we maximize the efficiency of systems and avoid tragedies of the commons while still encouraging freedom, competition, and innovation?

We have a way to talk about them

Ethicist Nick Bostrom offers an eloquent example of AIs that have gone too far. Tell a superhuman AI that its job is to make paperclips, he argues, and it will turn the entire universe into a paperclip factory. The objective function isn’t just how algorithms work, it’s why they work. (Want to play a game about this? It’s amazing.)

Isaac Asimov’s laws of robotics were a prescient attempt to frame a set of ethical constraints for sentient machines. “A robot may not injure a human being,” he wrote, “or, through inaction, allow a human being to come to harm.” Other rules for things like self-preservation took a lower priority, but together the rules formed a moral fabric for smart algorithms.

The bulk of his robot stories were explorations of the edge cases of these rules. For example, in one story (“The Evitable Conflict”) he theorized that world-scale AIs would formulate their own top-priority rule: Not harming humanity as a whole, even if that meant doing minor harm to specific individuals in order to achieve that goal.

There are more pressing ethical dilemmas even without sentient algorithms: Choosing which person to hit in the event of an accident, for example; or explaining decisions made by an algorithm at the expense of slowing down its performance and becoming less competitive.

The Trolley Problem is a classic example of an ethical dilemma with which machines will have to contend.

While these ethical examples might seem somewhat esoteric, the average person is quickly becoming aware that somehow, somewhere, the game is fixed. And they’re going to want to find out how.

One reason for this awareness is that as more and more decisions are made by machines, we’re getting unsatisfactory explanations from their masters: Why was welfare handled a certain way? Why did only a certain part of town get free deliveries, or more expensive hotels, or better pothole repairs? Why was one person paroled, and another sent back to jail? Why were police sent to a certain area? Why did a particular car fail emissions tests?

In case you think this is hyperbolic, recognize that these are all actual, widely reported data ethics incidents.

In the capitals of America and Europe, more examples of algorithms and automation hit the news on an almost daily basis. Citizens are increasingly aware they’re being played; consumers are now asking why they’re getting a particular offer, or seeing a specific message. The singular focus and unrelenting power of the objective function is coming home to roost.

We’re plastic

Almost every talk by a futurist or technology advocate contains the line, “the world is changing faster than ever before.” And that’s definitely got a ring of truth to it; the upgrade from atoms to bits, from physical to digital infrastructure, is fundamentally changing how the human species behaves.

But there’s good reason to believe we’ll manage. Humans have unusually large brains for our body size. We’re born with about the biggest brain that birth allows (much larger and delivery would endanger the mother), and in our formative months brain growth consumes a huge share of our calories. We’re built to learn and adapt.

Think about any science fiction movie you’ve seen featuring augmented reality. Characters look around them, and their whole world is overlaid with a heads-up display, telling them about their environment. It looks bewildering and foreign; a common trope is showing newcomers to these environments fumbling around, unable to handle them.

But nearly all of us have been through this before, and we managed just fine. When we learned to read and to understand signs, our reality was augmented. Words revealed our environments to us, helping with wayfinding, flagging dangers, identifying friends and sources of information, offering affordances.

So I think we’ll be just fine.

I’m not sure what the average person will call objective functions once the idea of them hits the mainstream. It’ll probably take the equivalent of Stephen Colbert’s “Truthiness” to christen the idea and launch it into the vernacular of public consciousness.

What’s a better word for “secretly evil goal”?

But businesses, platforms, and policymakers are about to realize that when you optimize within a dynamic system, the system adapts.

After all, that’s our objective function, and we were here first.